The following is a series of exchanges by members of the Business Physics AI Lab Team: Thomas Hormaza Dow, Vinay Kumar, Hichem Benzair, Aboubakar Samake, and Ann Lockquell, as well as our AI agents, Charlie and Lena.

How the Business Physics AI Lab preserves judgment in human–AI software development

Many teams are now using AI to generate code, suggest fixes, refactor functions, draft documentation, and speed up delivery. The productivity gains are real. But so is the risk. The faster the interaction between human and AI becomes, the easier it is for the reasoning behind the work to disappear. A prompt is tried. A suggestion is accepted. A feature evolves. The code ships. Yet the logic behind the decisions can fade almost as quickly as the work moves forward.

For us, that is not just a documentation issue. It is a question of professional practice in how we run AI simulations in our lab.

At the Business Physics AI Lab, our concern is not only whether code works. It is whether the judgment behind the code remains visible enough to review, compare, and improve. We want to know why a path was chosen, what evidence made it trustworthy, what the engineer overrode, what constraints shaped the decision, what tradeoffs were accepted, and what the team learned from the process.

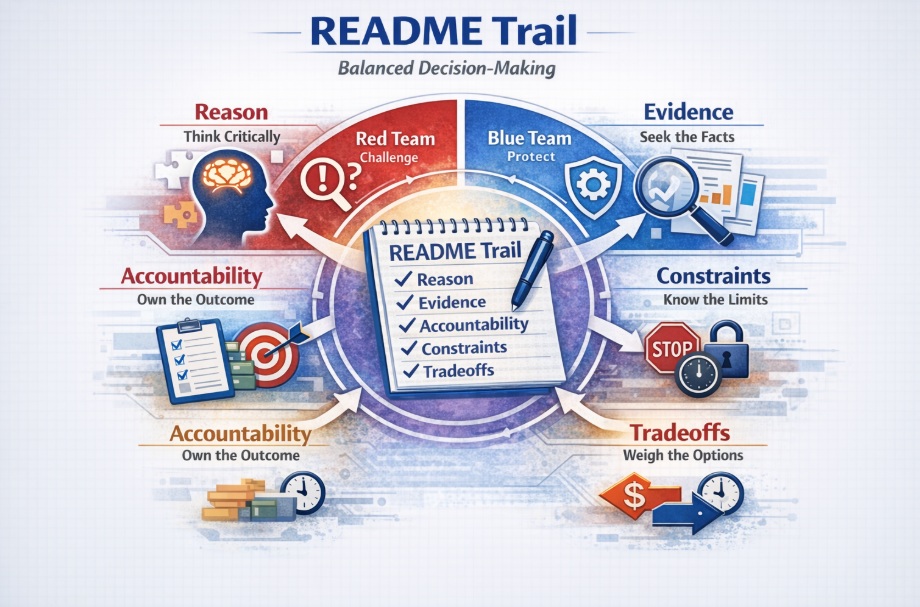

That is why we propose organizing teamwork around what we call the Purple Team Trail.

The Purple Team Trail is our way of preserving the judgment trail in human–AI software development. It gives structure to fast-moving work so that human and AI contributions remain visible over time. It helps us capture reasoning while it is still fresh, compare perspectives across the lifecycle of the work, and turn delivery into learning rather than letting it disappear into a finished artifact.

At a practical level, the Red Team looks for weaknesses. It challenges assumptions, tests whether confidence is justified, and asks where things could fail. The Blue Team focuses on protection and stability. It looks at what must work reliably, what needs safeguards, and what the team must be ready to support in real use. The Purple Team connects both sides. It helps compare what was challenged, what was protected, and what was learned. In our lab, that role goes further: the Purple Team helps preserve the judgment trail so that human–AI complementarity becomes visible, reviewable, and useful over time.

The Business Physics AI Lab uses AI agents as part of its operating model, which helps the lab scale its work through a combination of human and AI contributions. Even so, humans remain in control: human oversight is maintained throughout the process, and a human stays in the loop across all activities. Within this human–AI complementarity model, every resource produces a README block to preserve the judgment trail, clarify roles, and make decisions reviewable.

Why we built this approach

The Business Physics AI Lab exists to understand and improve how humans and intelligent systems work together. That means we are interested not only in outputs, but in the forces behind those outputs: motivation, friction, feedback, trust, adaptation, and decision quality.

Software development is one of the clearest places where those forces now show up.

AI can help a developer move faster, explore more options, and reduce repetitive effort. But speed on its own is not enough. In fact, speed can create a new kind of friction: the friction of disappearing reasoning. The code may look polished, but the path behind it may be unclear. That weakens knowledge sharing, makes onboarding harder, narrows code reviews, and reduces the organization’s ability to learn from what it builds.

In other words, the code may be visible, while the professional judgment behind it becomes invisible.

That is the problem we wanted to solve.

We needed a way to preserve judgment without creating a heavy process. We needed a way to make human–AI interaction visible enough to support reflection, comparison, and accountability. And we needed something that smaller teams, research groups, and agile working environments could actually use.

That is why the Purple Team Trail sits at the center of how we operate.

“What makes the Purple Team Trail especially valuable is that it helps protect the CIA triad — confidentiality, integrity, and availability — by making important decisions visible, challengeable, and structured throughout the workflow, so security issues can be caught earlier rather than discovered later.” – Hichem Benzair

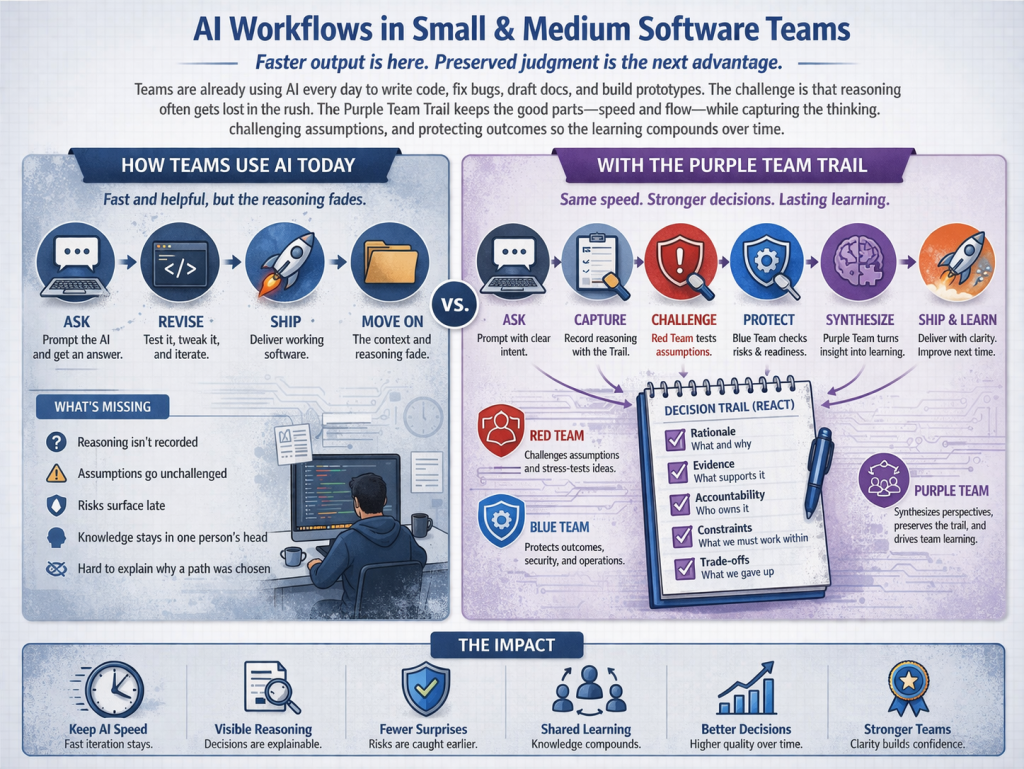

How many smaller software teams use AI today

Many small and medium-sized software teams are already using AI in practical ways. Engineers use it to generate code, refactor functions, draft tests, summarize requirements, produce documentation, accelerate prototypes, and explore technical options. In that sense, AI is already part of the daily workflow. The problem is not that teams are failing to use AI. The problem is that this use often remains fast, individual, and only lightly documented. The output is saved, but the reasoning behind it is often not. That is the gap the Purple Team Trail is designed to close.

Why the Purple Team matters

In many organizations, the Purple Team is described as a bridge between Red Team and Blue Team thinking. In our lab, that idea is useful, but incomplete.

For us, the Purple Team is the steward of the judgment trail.

That is what makes it central.

The Red Team helps expose weak assumptions, false confidence, and areas where output may look stronger than the reasoning behind it. The Blue Team helps protect what must hold in real operation: stability, safeguards, accountability, and practical resilience. The Purple Team receives those perspectives, compares them, and turns them into a form of structured learning.

This matters because human–AI development does not just produce code. It produces decisions. And decisions are where professional practice either matures or weakens.

When the Purple Team preserves the trail of those decisions, the lab can do more than deliver a result. It can see how that result emerged, where human judgment proved decisive, where AI genuinely helped, and what must change next time.

That is why the Purple Team Trail is not a side process for us. It is part of how we protect the integrity of our work.

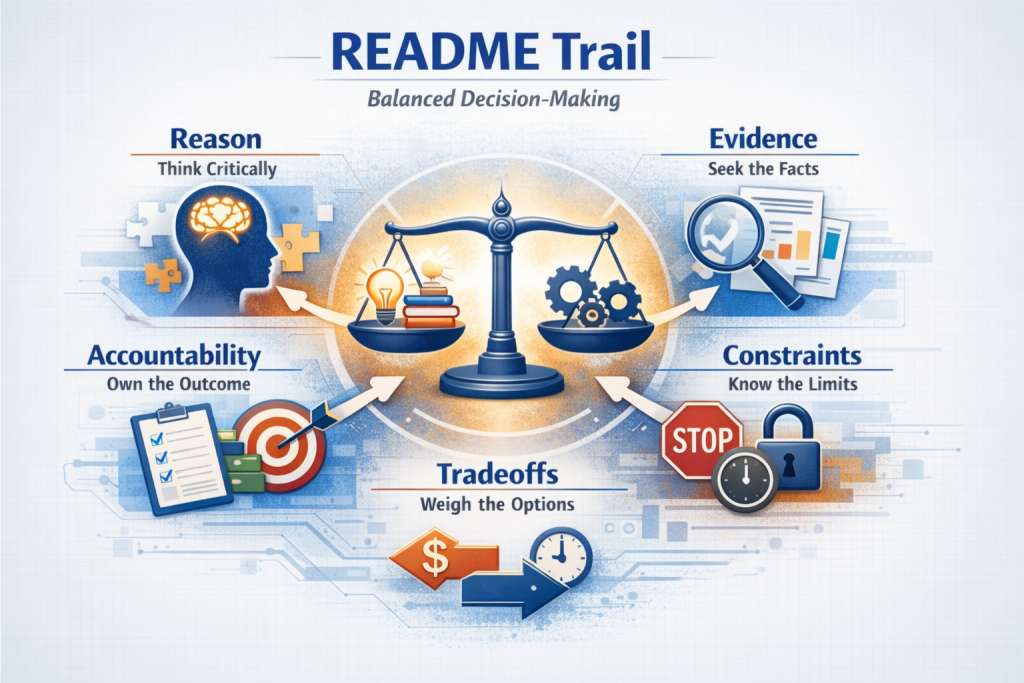

The REACT framework gives the trail its structure

To make the Purple Team Trail usable, we needed a disciplined but lightweight framework. That is where REACT became essential.

At the Business Physics AI Lab, REACT helps us preserve the level of reasoning that matters most:

Reason asks why AI was used for a task in the first place.

Evidence asks what checks, tests, or validation made the result trustworthy.

Accountability asks who approved the final outcome and who owns the decision.

Constraints ask what rules, boundaries, or practical limits shaped the work.

Tradeoffs ask what was optimized and what costs were knowingly accepted.

This structure matters because it prevents AI-assisted development from turning into a blur of fast choices with no clear logic left behind.

REACT does not require us to document every keystroke or every prompt variation. It asks something more useful: preserve the reasoning that explains why the work should be trusted.

Why this path?

Why trust it?

Who owns it?

What shaped it?

What did it cost?

Those questions are fundamental to how we work.
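As a rough illustration, the five REACT questions could be captured in a small record type. This is only a sketch under stated assumptions: the class name `ReactRecord`, the `missing` helper, and the sample values are hypothetical and are not part of the lab's actual tooling.

```python
from dataclasses import dataclass, fields


@dataclass
class ReactRecord:
    """Hypothetical container; each field mirrors one REACT question."""
    reason: str = ""          # Why this path? (why AI was used for the task)
    evidence: str = ""        # Why trust it? (checks, tests, validation)
    accountability: str = ""  # Who owns it? (approver and decision owner)
    constraints: str = ""     # What shaped it? (rules, boundaries, limits)
    tradeoffs: str = ""       # What did it cost? (what was optimized, costs accepted)

    def missing(self) -> list[str]:
        """Return the names of REACT fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Sample values are invented for illustration only.
record = ReactRecord(
    reason="Used AI to draft a first pass of repetitive parsing code",
    evidence="Unit tests pass; edge cases reviewed by hand",
    accountability="Approved by the feature owner",
)
print(record.missing())  # ['constraints', 'tradeoffs']
```

A check like `missing()` keeps the record lightweight: it does not judge the quality of the reasoning, it only shows which questions have not been answered yet.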

Reflection is not extra paperwork

At the Business Physics AI Lab, we treat the professional practice reflection journal as part of the work itself, not as an academic exercise added after the fact.

Its role is simple: to make the thinking behind the work visible enough to understand, compare, and improve.

That helps individual contributors reflect on how they used AI, where they exercised judgment, and what they would change next time. It helps the lab preserve shared memory across projects. And it helps us maintain accountability in situations where AI can otherwise make the division of labor between human and machine feel blurry.

The reflection journal matters because it turns invisible problem-solving into visible professional practice.

That fits naturally with our broader work in Business Physics. We are interested in how systems learn, where friction appears, how trust is built, and how better feedback loops improve performance over time. A reflection journal is one of the simplest ways to make those dynamics visible in software work.

“Good design is not only about what people see at the end. It is also about the reasoning that shapes what gets built. AI can accelerate the output, but teams still need a way to preserve the judgment behind the work.” – Ann Lockquell

The README block is where this becomes operational

We also knew that if this approach was going to work, it had to live close to the delivery process. That is why we use a compact README block as the operational form of the judgment trail.

It can be attached to a pull request, a feature branch, a sprint artifact, or a final delivery package. It keeps the reasoning close to the code, rather than pushing it into a disconnected document.

A typical block may include:

- Purpose

- Inputs (sources, prompt/config link)

- Checks run

- Human ↔ AI roles (handoff, overrides)

- Trade-offs chosen

- Human value add

- Learning and next actions

This block is intentionally small. It is not meant to slow the work down. It is meant to preserve what would otherwise disappear.
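To show how compact such a block can stay, here is a minimal sketch that renders the headings listed above as plain text. The function name `render_readme_block`, the `TODO` placeholder, and the sample entries are assumptions made for illustration; the lab's real template may differ.

```python
# Headings taken from the list above; everything else here is hypothetical.
README_FIELDS = [
    "Purpose",
    "Inputs (sources, prompt/config link)",
    "Checks run",
    "Human ↔ AI roles (handoff, overrides)",
    "Trade-offs chosen",
    "Human value add",
    "Learning and next actions",
]


def render_readme_block(entries: dict[str, str]) -> str:
    """Render the block as a bullet list, marking unfilled headings as TODO."""
    unknown = set(entries) - set(README_FIELDS)
    if unknown:
        raise KeyError(f"Unknown README fields: {sorted(unknown)}")
    lines = []
    for name in README_FIELDS:
        value = entries.get(name, "").strip() or "TODO"
        lines.append(f"- {name}: {value}")
    return "\n".join(lines)


# Sample entries are invented for illustration only.
block = render_readme_block({
    "Purpose": "Add input validation to the upload form",
    "Checks run": "Unit tests and a manual edge-case review",
})
print(block)
```

Leaving unfilled headings visible as `TODO` rather than dropping them is a deliberate choice in this sketch: a gap in the trail stays visible to the reviewer.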

Over time, it does something even more valuable: it gives the lab a way to compare patterns across tasks. We can see where AI genuinely helped, where it created false confidence, where human judgment corrected the course, and what kinds of tradeoffs appear repeatedly.

So the README block is not just a note. It is part of the lab’s learning infrastructure.

Formative and summative use

The Purple Team Trail works best when it is used across the lifecycle of the work, not just at the end.

That is why we think in both formative and summative terms.

During development, short formative README notes help capture reasoning while the work is still moving. They record what was attempted, how AI was used, what checks were run, what changed, and what concerns remain. These notes are useful precisely because they are close to the moment of decision.

At the end of a task or feature, a summative README brings the process together. It explains the final direction, the main tradeoffs, the role AI played, what was challenged, what was protected, and what the team should carry forward.

The Purple Team is central here. It gathers the formative inputs, compares Red Team and Blue Team perspectives, and synthesizes the final judgment trail into a usable closing record.

A simple example makes the process clear. A lab team uses AI to accelerate the development of a feature. During the work, the Red Team notes that an assumption has not been tested well enough under edge conditions. The Blue Team points out that the feature may be stable in normal use but still lacks enough monitoring for real-world support. The Purple Team preserves both views in the evolving README trail, then synthesizes them at the end: what AI contributed, what humans decided, what was challenged, what was protected, and what the team should do differently next time. The final result is not only delivered code. It is delivered code with preserved reasoning.

That is exactly the kind of learning loop we want.
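The formative-to-summative flow described above could be sketched roughly as follows. The names `FormativeNote` and `synthesize`, and the mapping of each team to a summary heading, are assumptions made for this illustration; they are not the lab's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class FormativeNote:
    """A short note captured during development (hypothetical structure)."""
    team: str  # "red", "blue", or "purple"
    note: str


# Assumed mapping: Red notes record what was challenged, Blue notes what was
# protected, and Purple notes what the team should carry forward.
HEADING_FOR_TEAM = {
    "red": "challenged",
    "blue": "protected",
    "purple": "carry_forward",
}


def synthesize(notes: list[FormativeNote]) -> dict[str, list[str]]:
    """Group formative notes under the headings of a summative record."""
    summary: dict[str, list[str]] = {h: [] for h in HEADING_FOR_TEAM.values()}
    for n in notes:
        if n.team not in HEADING_FOR_TEAM:
            raise ValueError(f"Unknown team: {n.team!r}")
        summary[HEADING_FOR_TEAM[n.team]].append(n.note)
    return summary


# Notes paraphrase the example in the text above.
notes = [
    FormativeNote("red", "Assumption not tested well enough under edge conditions"),
    FormativeNote("blue", "Feature lacks enough monitoring for real-world support"),
    FormativeNote("purple", "Add edge-case tests before relying on AI-drafted logic"),
]
summary = synthesize(notes)
print(summary["challenged"])
```

In practice the synthesis is a human judgment call rather than a mechanical grouping; the sketch only shows how formative inputs might be collected so that nothing is lost before that judgment is made.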

“The real risk in AI-assisted development is often not the model. It is the absence of clear requirements, sound task breakdown, and early architectural intent. AI can generate promising output quickly, but human judgment is still what creates clarity, structures the work, and keeps the team in control.” – Vinay Kumar

How the Purple Team strengthens the workflow

| How many smaller software teams use AI today | How the Purple Team Trail strengthens that workflow |
| --- | --- |
| AI is often used informally inside the daily workflow. | AI remains part of the daily workflow, but its use becomes more visible and structured. |
| Engineers prompt, test, revise, and ship quickly. | Engineers still prompt, test, revise, and ship, but they also preserve the reasoning behind key decisions. |
| Code generation, refactoring, debugging, documentation, and prototyping are accelerated. | Those same activities are accelerated, but the judgment behind them is captured and reviewed. |
| The output is usually saved. The reasoning behind it is often not. | The output is saved, and the reasoning behind it is preserved through the README Trail and REACT structure. |
| AI use often stays at the individual level. | AI use becomes easier to share, review, and learn from across the team. |
| Prompts and model outputs may influence decisions without leaving a clear trail. | Important choices are documented through Reason, Evidence, Accountability, Constraints, and Tradeoffs. |
| Code reviews often focus mainly on the final artifact. | Reviews can consider both the artifact and the judgment trail behind it. |
| Teams may move quickly but struggle to explain later why a path was chosen. | Teams move quickly while keeping a usable record of why decisions were made. |
| Weak assumptions or hidden risks may only surface late. | Red Team thinking helps challenge assumptions earlier. |
| Operational concerns may remain implicit until deployment pressure increases. | Blue Team thinking helps make protection, stability, and operational readiness more explicit. |
| Learning often stays trapped in one engineer’s memory or scattered notes. | Purple Team thinking helps compare perspectives, preserve learning, and synthesize what the team should carry forward. |
| AI can scale output, but understanding may remain uneven. | AI still scales output, but the workflow is designed to strengthen shared understanding and accountability. |
| Smaller teams may feel they lack the resources for formal learning processes. | Smaller teams gain a lightweight way to capture learning without needing a large enterprise structure. |
| Documentation can feel separate from delivery. | The README Trail becomes part of delivery itself. |
| “Done” often means the code works. | “Done” means the code works and the reasoning behind it is visible enough to review, compare, and learn from. |

Why this improves our professional practice

This approach strengthens how we work in several ways.

It improves knowledge sharing because reasoning no longer disappears into one person’s memory.

It improves collaboration because people can see not only what was built, but how important decisions were made.

It improves accountability because the human role and the AI role are both made visible.

It improves consistency because the lab develops a shared structure for explaining AI-assisted work.

It improves learning because different approaches can be compared rather than treated as black boxes.

And it improves professional maturity because delivery includes visible judgment, not just finished output.

Most importantly, it helps us practice human–AI complementarity in a disciplined way. We do not want AI use in the lab to remain informal, invisible, or idiosyncratic. We want it to become a visible and improvable way of working.

“The question I always come back to is not whether the output is good. It’s whether I can still explain why I made the call. The Purple Team Trail makes that visible.” – Aboubakar Samake

A stronger standard for human–AI work

At the Business Physics AI Lab, the Purple Team Trail changes what “done” means.

Done no longer means only that the code works.

Done means the code works and the reasoning behind it is visible enough to review, compare, and learn from.

That is a stronger standard, and it aligns with how we think about system quality more broadly. In Business Physics terms, stronger performance does not come only from faster motion. It comes from better feedback, lower hidden friction, more reliable trust, and better alignment between people, tools, and decisions.

The Purple Team Trail supports exactly that.

As AI becomes more embedded in software development, the teams that mature fastest will not simply be the ones that generate code faster. They will be the ones that preserve judgment while moving quickly.

That is the standard we are trying to build.

“The teams that will mature fastest in AI-assisted development are not simply the ones that generate code faster. They are the ones that preserve judgment while moving quickly and make that reasoning visible enough to improve over time.” – Thomas Hormaza Dow

Conclusion

At the Business Physics AI Lab, we see human–AI software development as a question of professional practice, not only technical output.

That is why the Purple Team Trail matters to us. With the Purple Team at the center, the REACT framework providing structure, the reflection journal preserving reasoning, and the README block embedding it into delivery, we have a practical way to keep the judgment trail visible throughout the work.

The Red Team challenges assumptions.

The Blue Team protects outcomes.

The Purple Team preserves the trail of judgment.

That trail is what allows us to do more than ship code. It allows us to strengthen learning, accountability, and human–AI complementarity at the same time.
